Chapter 3: Phonetics

3.1 Modality

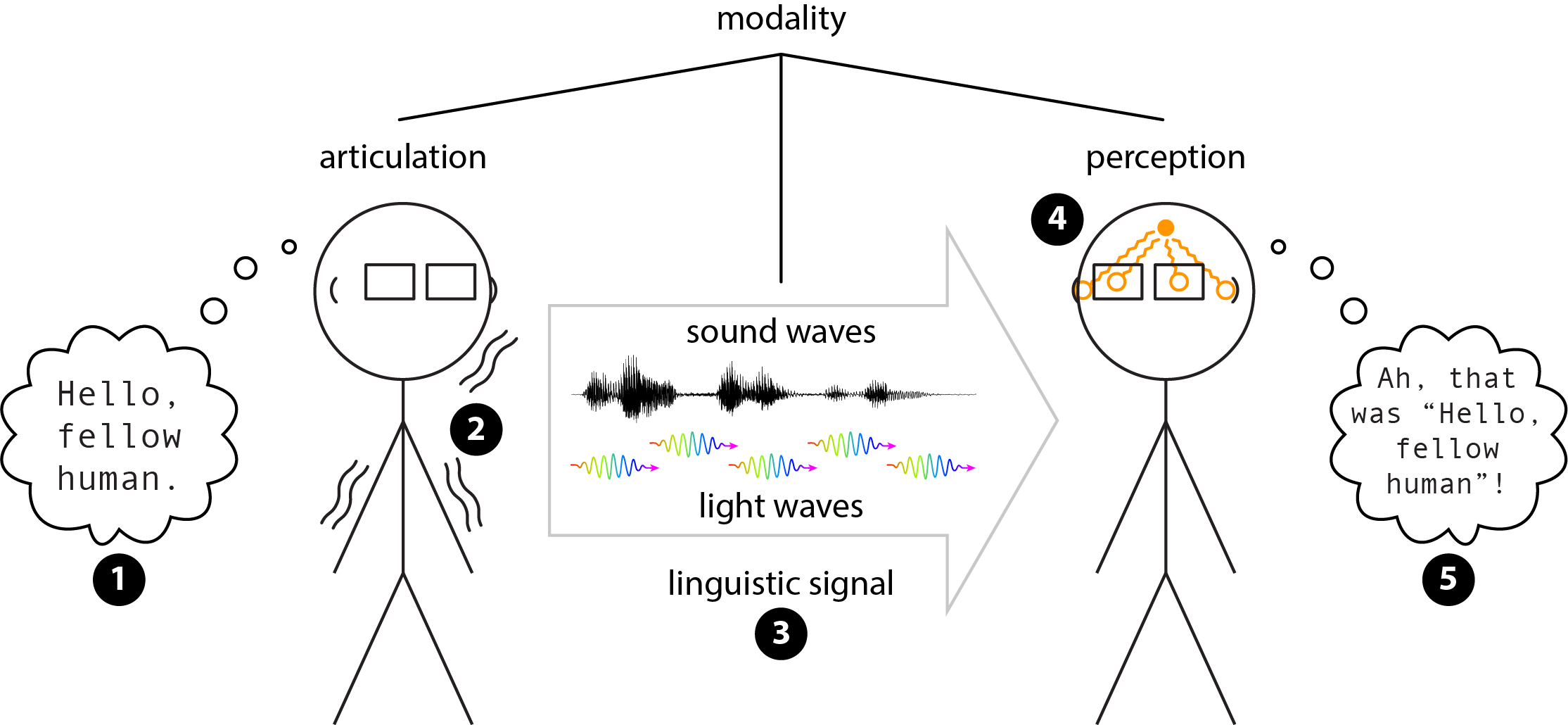

The major components of communication

An act of communication between two people typically begins with one person constructing some intended message in their mind (step ❶ in Figure 3.1). This person can then give that message physical reality through various movements and configurations of their body parts, called articulation (step ❷). The physical linguistic signal (step ❸) can come in various forms, such as sound waves (for spoken languages) or light waves (for signed languages). The linguistic signal is then received, sensed, and processed by another person’s perception (step ❹), allowing them to reconstruct the intended message (step ❺). The entire chain of physical reality, from articulation to perception, is called the modality of the language.

Spoken and signed languages

The modality of spoken languages, such as English and Cantonese, is vocal, because they are articulated with the vocal tract; acoustic, because they are transmitted by sound waves; and auditory, because they are received and processed by the auditory system. This modality is often shortened to vocal-auditory, leaving the acoustic nature of the signal implied, since that is the ordinary input to the auditory system.

Signed languages, such as American Sign Language and Chinese Sign Language, also have a modality: they are manual, because they are articulated by the hands and arms (though most of the rest of the body can be used, too, so this component of modality might best be called corporeal); photic, because they are transmitted by light waves; and visual, because they are received and processed by the visual system. This modality is often shortened to manual-visual.

Other modalities are also possible, but a full discussion of them is beyond the scope of this textbook. One notable example is the manual-somatic modality of tactile signing, in which linguistic signals are articulated primarily by the hands and are perceived by the somatosensory system, which is responsible for sensing various physical phenomena on the skin, such as pressure and movement. This modality can be used by deafblind people to communicate, often by adapting aspects of an existing signed language in such a way that the signs are felt rather than seen. Examples of such languages include tactile Italian Sign Language (Checchetto et al. 2018) and a tactile version of American Sign Language called Protactile (Edwards and Brentari 2020).

Finally, it is important to note that actual instances of communication are often multimodal, with language users making use of the resources of more than one modality at a time (Perniss 2018, Holler and Levinson 2019, Henner and Robinson 2023). For example, spoken language is often accompanied by various kinds of co-speech behaviours, such as shrugging, facial expressions, and hand gestures, which are used for many meaningful functions in the linguistic signal: emphasis, emotion, attitude, shifting topics, taking turns in a conversation, etc. (Hinnell 2020; also see Sections 8.7 and 10.4 for discussion of some related issues and examples). A full analysis of how language works must ultimately take into account its multimodal nature and the complexity and flexibility of how humans do language.

Terminological note: Signed languages are sometimes called sign languages. Both terms are generally acceptable, so you may encounter either one in linguistics writing. Sign languages has long been the more common term, but signed languages has recently been gaining popularity among deaf scholars.

Another piece of relevant terminology that is in flux is the long-standing distinction in capitalization between uppercase Deaf (a sociocultural identity) and lowercase deaf (a physiological status). However, this distinction has been argued to contribute to elitist gatekeeping within deaf communities, so many deaf people have pushed to eliminate this distinction (Kusters et al. 2017, Pudans-Smith et al. 2019).

In this textbook, we follow these prevailing modern trends by using signed languages and by not using the Deaf/deaf distinction. However, the alternatives are still widespread in linguistics writing, so you may still encounter them.

For these issues, it is important to proceed with caution and follow the lead of anyone more knowledgeable than you, especially if they are deaf. If you are uncertain what usage is appropriate in a given situation with a given deaf person, ask what they prefer.

The study of modality

Because spoken languages have long been the default object of study in linguistics, and because the vocal-auditory modality is centred on sound, the study of linguistic modality is called phonetics, a term derived from the Ancient Greek root φωνή (phōnḗ) ‘sound, voice’. However, all languages have many underlying similarities, so linguists have long used many of the same terms to describe properties of different modalities, even when the etymology is specific to spoken languages. This includes the term phonetics, which is now commonly used to refer to the study of linguistic modality in general, not just the vocal-auditory modality.

This is an important reminder that the etymology of a word may give you hints to its meaning, but it does not determine its meaning. Instead, the meaning of a word is determined by how people actually use that word (for more discussion of meaning, check out Chapter 7 on semantics). This usage-based meaning can diverge from and even contradict historical etymology, especially in scientific fields where our knowledge of the world is constantly evolving.

An example of such a divergence between etymology and current usage for a scientific term can be seen with the English word atom, which comes from the Ancient Greek ἄτομος (átomos) ‘indivisible’. This term was used by Ancient Greek philosophers to represent their belief that atoms were the smallest building blocks of matter. However, more than 2000 years later, we discovered that atoms are in fact divisible, being made up of protons, neutrons, and electrons. Rather than rename atoms, we just kept the old name and accepted that its etymology was no longer an accurate representation of our current scientific knowledge. The same is true for the term phonetics.

However, be aware that many linguists still hold biased views about language and linguistics, and they often forget to include signed languages and other modalities when talking about phonetics, or even language in general. Some may even think signed languages cannot have phonetics at all. As linguists have become more knowledgeable about linguistic diversity and more sensitive to challenges faced by marginalized groups (such as deaf and deafblind people), there has been an ongoing shift towards increased inclusivity in how we talk about language. As with any such shift, some people will remain in the past, while others will be proactively part of the inevitable future.

In this chapter, we focus on articulatory phonetics, which is the study of how the body creates a linguistic signal. The other two major components of modality also have dedicated subfields of phonetics. Perceptual phonetics is the study of how the human body perceives and processes linguistic signals. We can also study the physical properties of the linguistic signal itself. For spoken languages, this is the field of acoustic phonetics, which studies linguistic sound waves. However, there is currently no comparable subfield of phonetics for signed languages, because the physical properties of light waves are not normally studied by linguists. Perceptual and acoustic phonetics are beyond the scope of this textbook.

References

Checchetto, Alessandra, Carlo Geraci, Carlo Cecchetto, and Sandro Zucchi. 2018. The language instinct in extreme circumstances: The transition to tactile Italian Sign Language (LISt) by Deafblind signers. Glossa: A Journal of General Linguistics 3(1): 66.

Edwards, Terra, and Diane Brentari. 2020. Feeling phonology: The conventionalization of phonology in protactile communities in the United States. Language 96(4): 819–840.

Henner, Jon, and Octavian Robinson. 2023. Unsettling languages, unruly bodyminds: Imaging a Crip Linguistics. Journal of Critical Study of Communication & Disability 1(1): 7–37.

Hinnell, Jennifer. 2020. Language in the body: Multimodality in grammar and discourse. Doctoral dissertation, University of Alberta.

Holler, Judith, and Stephen C. Levinson. 2019. Multimodal language processing in human communication. Trends in Cognitive Sciences 23(8): 639–652.

Kusters, Annelies, Maartje De Meulder, and Dai O’Brien. 2017. Innovations in Deaf Studies: Critically mapping the field. In Innovations in Deaf Studies: The role of deaf scholars, ed. Annelies Kusters, Maartje De Meulder, and Dai O’Brien, Perspectives on Deafness, 1–56. Oxford: Oxford University Press.

Perniss, Pamela. 2018. Why we should study multimodal language. Frontiers in Psychology 9(1109): 1–5.

Pudans-Smith, Kimberly K., Katrina R. Cue, Ju-Lee A. Wolsley, and M. Diane Clark. 2019. To Deaf or not to deaf: That is the question. Psychology 10(15): 2091–2114.